How personal growth turned into object-level AI safety research

It surprises me that the ideas I learned from being depressed turned into object-level AI safety research.

But it seems like it's working? For example, I'm running a 5-day workshop on the topic next month, and I just ran a 3-day workshop on the topic last month.

My topic is boundaries.

Backstory

For half of 2022, I was pretty depressed. My best explanation for why is that being depressed was a way for me to avoid social interaction when it felt unsafe. One of my largest fears back then was that if I interacted with other people, they would be able to control me. For example, that they would be able to make me feel bad in ways I couldn't resist. So social interaction felt unsafe.

At the same time, I was also trying to control other people to make them like me. But it wasn't working the way I expected, which left me very confused, and I was suffering over it.

I spoke to a skilled counselor about this, and I realized that I was misunderstanding the natural boundaries between people. I realized that I cannot actually unilaterally control other people, and they cannot unilaterally control me either. There are natural boundaries!

But as I began to learn more about boundaries, I became frustrated with the way other people spoke about them. The way many people talk about boundaries seems really inconsistent to me. Most of the time I heard people say the word "boundaries" it seemed to me like they really meant "preferences".

So I tried to develop my own logically consistent understanding of psychological boundaries instead.

After a few months (exactly a year ago at the time of writing) I had some conclusions about psychological boundaries, and I was explaining them to a friend (@Ulisse Mini). And he's an AI safety researcher, so I joked, "And, hey, maybe all of this boundaries stuff applies to AI safety, too. Just have AI respect the natural boundaries and that's safety, right? Haha…"

And he said, "No yeah, Andrew Critch already wrote a series about that."

I read the series.

And I had never wanted to work in "AI Safety" before, but boundaries seemed like a cool way to understand the world better, so sure, why not. I didn't have funding, and I was still unemployed as I had mostly been for the past year. From there, I wrote the boundaries compilation post, created the boundaries/membranes tag on LW, went to AI safety workshops and spoke to a bunch of people about boundaries…

(In the beginning, I'm pretty sure a bunch of the people I spoke to thought I was crazy. Back then I would explain AI safety boundaries by the symmetries with psychology.)

Anyways, I wrote a bunch more posts[1], planned, got funding for, and ran a 3-day workshop on boundaries in AI safety (https://formalizingboundaries.ai/), got personal research funding, wrote more things, and got more funding. I'm about to run a 5-day Mathematical Boundaries Workshop next month.

As it turns out, understanding the boundaries (more precisely: the causal distance) between agents in psychology seems to be very useful for understanding the boundaries between humans and AIs!

A bunch of people are excited about boundaries now, too. But this definitely wasn't the case when I first started.

Ways in which AI safety boundaries and psychological boundaries are similar

Here are a few ways in which I have similar intuitions for boundaries in both AI safety and psychology.

Minimizing conflict between agents

I was first interested in psychological boundaries because of my depression thing, but then potentially as a more general solution to social conflict. I noticed that many social conflicts seemed to reduce to "one person is trying to control another and expecting it to work" or "one person is expecting another person to be able to control them, and the first person could resist but isn't". But this is just misunderstanding natural boundaries. People can't actually control each other like this.

At the time, I thought that if people could just understand natural boundaries better, then this would reduce a lot of conflict. So if I could just figure out the correct understanding of natural boundaries and then teach others that, then they'd have a lot less conflict.

(Later I realized that being good at psychological boundaries has little to do with consciously understanding boundaries and actually requires something deeper, but that's beyond the scope of this post and not that related to AI.)

I took this same intuition to AI safety. "Safety" seemed like the absence of conflict, where conflict means things like AIs controlling humans or dissolving the boundaries of humans. That would be bad. So maybe just… preserve the boundaries instead? That intuitively feels like it gets at most of what "safety" is (albeit not full alignment).

I think minimizing social conflict turns out to be very similar to minimizing AI un-safety.

Distinct from preferences

Boundaries in both AI safety and psychology are a distinct concept from preferences/desires.

Andrew Critch, «Boundaries» Sequence, Part 3b:

my goal is to treat boundaries as more fundamental than preferences, rather than as merely a feature of them. In other words, I think boundaries are probably better able to carve reality at the joints than either preferences or utility functions, for the purpose of creating a good working relationship between humanity and AI technology

In AI safety, I think it's much easier to talk about boundaries than preferences because true boundaries don't really conflict between individuals (humans or AIs), whereas preferences often do.

I found something similar in my psychological research. (What I consider to be) the real boundaries are distinct from preferences.

(That said, some fraction of the time when people say "I'm setting a boundary: […]", I think they really mean "this is my preference".)

Markov blankets

I model both AI safety boundaries and psychological boundaries by thinking about Markov blankets / causal distance / causal bottlenecks.

When I explain AI safety boundaries on a technical level, the main formalism I refer to is Markov blankets.

Meanwhile, the way that I usually explain psychological boundaries is "You (the person) are a bundle of communication bandwidth. You have far more causal influence / mutual information with your arm and mind than anyone else does, and you have less causal influence / mutual information with everything else and everyone else in the world…"[2]

And that's basically equivalent to saying, "It is useful for you (as an individual) to model the Markov blanket that exists between you and everyone else."
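
To make the Markov blanket intuition concrete, here is a minimal toy sketch. It is not the formalism from Critch's sequence or from the workshops; it is just an illustration under made-up assumptions. It models a hypothetical chain of three binary variables, internal state X → blanket B → external state Y, with arbitrary noise levels (`FLIP_XB` and `FLIP_BY` are invented for this example). It then checks two things: X shares more mutual information with its own blanket than with the outside world, and once the blanket is known, X and Y share no further information.

```python
import math
from itertools import product

# Hypothetical toy model: internal state X -> blanket B -> external state Y.
# All three are binary; the noise levels are made-up illustration values.
P_X = {0: 0.5, 1: 0.5}
FLIP_XB = 0.1  # chance the blanket misreports the internal state
FLIP_BY = 0.2  # chance the external state misreads the blanket


def channel(inp, out, flip):
    """Probability of `out` given `inp` through a noisy binary channel."""
    return flip if inp != out else 1.0 - flip


def joint():
    """Joint distribution P(x, b, y) for the chain X -> B -> Y."""
    return {
        (x, b, y): P_X[x] * channel(x, b, FLIP_XB) * channel(b, y, FLIP_BY)
        for x, b, y in product((0, 1), repeat=3)
    }


def marginal(p, keep):
    """Sum out every variable whose index is not listed in `keep`."""
    out = {}
    for state, prob in p.items():
        key = tuple(state[i] for i in keep)
        out[key] = out.get(key, 0.0) + prob
    return out


def mutual_information(p, a, b):
    """I(A;B) in bits; a and b are indices into the state tuple."""
    p_ab, p_a, p_b = marginal(p, (a, b)), marginal(p, (a,)), marginal(p, (b,))
    return sum(
        prob * math.log2(prob / (p_a[(va,)] * p_b[(vb,)]))
        for (va, vb), prob in p_ab.items()
        if prob > 0
    )


def conditional_mutual_information(p, a, b, c):
    """I(A;B|C) in bits: what A and B still share once C is known."""
    p_abc = marginal(p, (a, b, c))
    p_ac, p_bc, p_c = marginal(p, (a, c)), marginal(p, (b, c)), marginal(p, (c,))
    return sum(
        prob * math.log2(prob * p_c[(vc,)] / (p_ac[(va, vc)] * p_bc[(vb, vc)]))
        for (va, vb, vc), prob in p_abc.items()
        if prob > 0
    )


p = joint()
X, B, Y = 0, 1, 2
i_xb = mutual_information(p, X, B)
i_xy = mutual_information(p, X, Y)
i_xy_given_b = conditional_mutual_information(p, X, Y, B)

print(f"I(X;B)   = {i_xb:.3f} bits")
print(f"I(X;Y)   = {i_xy:.3f} bits")
print(f"I(X;Y|B) = {i_xy_given_b:.3f} bits")
```

In this toy chain, everything the outside learns about the inside has to pass through B, which is the sense in which B screens off X from Y. Real agents are nothing like a three-variable chain, so treat this only as a picture of the "bundle of communication bandwidth" idea.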

Personal development → AI safety research pipeline

So that's how outgrowing my depression turned into object-level AI safety insight.

Going forward, I'm hoping to once again turn insights in my personal life into AI safety intuitions.

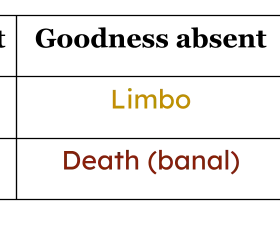

Basically, I see boundaries as helpful for "formalizing badness". Anything that dissolves or violates an important membrane is bad.

(I don't think boundaries formalize all of badness, but I think they get a good chunk.)

My rough hope is that if "badness" can be formally specified, then "the absence of badness" can be written as a spec for provably safe AI. (This is how I interpret davidad's plan. Also see this post and this tag.)

But, what is Goodness? What would be necessary for the positive components of alignment?

For example, in my personal life I've learned how to minimize the conflict I have with others (via boundaries), and I think I've gotten really good at that. But I feel like I haven't yet fully learned how to connect with people and make positive things happen. Similarly, I'm no longer depressed or anxious, but I also haven't totally figured out joy yet.

I have the intuition that if I figured out positive things in my personal life then I would make progress on formalizing goodness more generally.

So that's the story of my last year. Please reach out if you're a woo AI alignment funder.

Thanks to Alex Zhu for support and encouragement since the beginning.

Update:

Social interaction-inspired AI alignment

Conjecture: Understanding the psychology of human social interaction will help with AI alignment.

[1] though I later deleted the bad posts

[2] Fun fact: I recently shared this definition with Frank Yang (enlightened guy on youtube) and he already agreed with me.